Neural Network

You don't need a deep understanding of how neural networks work to use Stonito Lotto effectively.

Understanding the basic configuration options is enough.

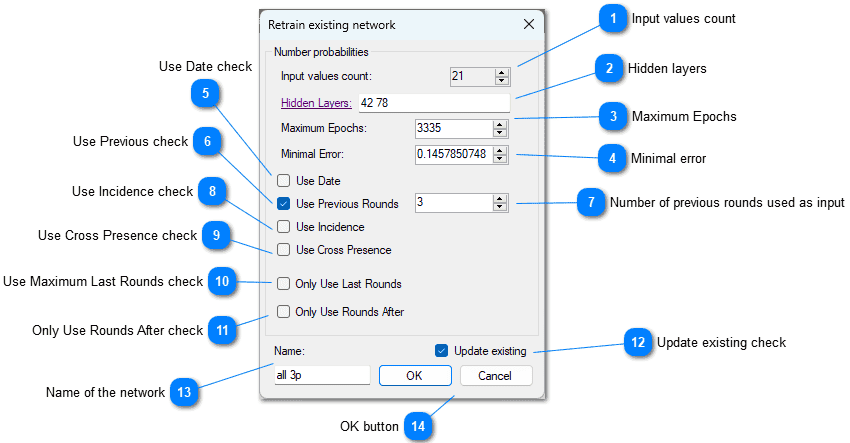

Input values count

This value is the number of inputs to the neural network. It is calculated from the network settings and the types of input data the network uses.

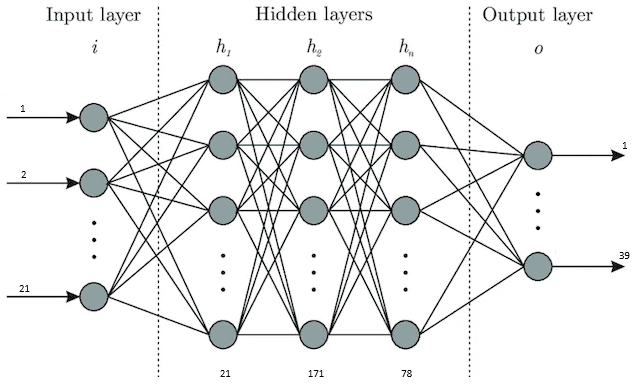

Hidden layers

Every neural network has at least two layers: the input layer and the output layer. They are implied and not shown here. Hidden layers are the layers that connect the input and output layers. Each hidden layer is represented only by the number of nodes it contains. Each number in the list defines one hidden layer with that many nodes, in order starting from the input layer. The more layers you add, the more complex the network becomes. A complex network needs more time to train but can capture more intricate relationships between input and output data.
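To illustrate how the hidden-layer list combines with the implied input and output layers, here is a minimal Python sketch. It assumes fully connected layers with one bias per node; the function names and the example sizes (87 inputs, 39 outputs for a 39-number pool) are hypothetical and not taken from Stonito Lotto. It shows why adding layers or nodes increases the number of trainable parameters, which is one reason a more complex network takes longer to train.

```python
from typing import List


def layer_sizes(input_count: int, hidden: List[int], output_count: int) -> List[int]:
    # The input and output layers are implied; the hidden list sits between them.
    return [input_count] + list(hidden) + [output_count]


def parameter_count(sizes: List[int]) -> int:
    # Assumes fully connected layers: every node feeds every node in the next
    # layer, and each non-input node has one bias.
    total = 0
    for n_in, n_out in zip(sizes, sizes[1:]):
        total += n_in * n_out + n_out
    return total


# Hypothetical example: 87 inputs, hidden layers "50, 20", 39 outputs.
sizes = layer_sizes(87, [50, 20], 39)
print(sizes)                   # [87, 50, 20, 39]
print(parameter_count(sizes))  # 6239
```

Adding a third hidden layer of 20 nodes to this example would add several hundred more parameters, each of which has to be adjusted during training.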

Maximum Epochs

This setting relates to training the network. When this number of epochs is reached, training stops. One epoch is one complete pass over the training data, similar to one generation.

Minimal error

This setting also relates to training. When the minimal error is reached, training stops. The error is not expected to converge to zero, because predicting draws is not a deterministic problem.
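The two stopping conditions above (Maximum Epochs and Minimal error) can be sketched as a simple training loop. This is a minimal Python illustration, assuming a hypothetical `train_one_epoch` callback that runs one pass over the training data and returns that epoch's error; it is not Stonito Lotto's actual training code.

```python
from typing import Callable, Tuple


def train(train_one_epoch: Callable[[], float],
          max_epochs: int, min_error: float) -> Tuple[int, float]:
    """Run epochs until max_epochs is reached or the error drops to min_error."""
    epoch = 0
    error = float("inf")
    while epoch < max_epochs:
        error = train_one_epoch()  # hypothetical: one full pass, returns the error
        epoch += 1
        if error <= min_error:     # Minimal error reached
            break
    return epoch, error            # loop also ends when Maximum Epochs is reached


# Simulated errors stand in for real training.
simulated_errors = iter([0.5, 0.3, 0.2, 0.1, 0.05])
print(train(lambda: next(simulated_errors), max_epochs=10, min_error=0.15))  # (4, 0.1)
```

Because the error will not reach zero, a Minimal error set too low effectively leaves Maximum Epochs as the only condition that ends training.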

Use Date check

Use the date of the drawing as an input.

Use Previous check

Use the numbers of previous drawings as inputs.

Number of previous rounds used as input

Defines how many previous rounds are used as inputs for training and inference. For example, if the number pool is 39, a value of 3 means that in training, the three previous rounds are used as inputs for every round, which makes 87 input values in total.
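The manual does not describe how each previous draw is encoded into input values, so the following Python sketch shows only the pairing step: each round becomes a training target, and the rounds immediately before it become its inputs. The function name `windows` and the tuple representation of a draw are hypothetical.

```python
from typing import List, Sequence, Tuple

Draw = Tuple[int, ...]  # hypothetical: the numbers drawn in one round


def windows(history: Sequence[Draw], k: int) -> List[Tuple[List[Draw], Draw]]:
    """Pair each round (target) with the k rounds drawn before it (inputs).

    history is ordered oldest first; the first k rounds have no full
    window of predecessors, so they are skipped.
    """
    samples = []
    for i in range(k, len(history)):
        samples.append((list(history[i - k:i]), history[i]))
    return samples
```

For inference, the same idea applies to the newest rounds: `history[-k:]` would be the input window for predicting the next draw.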

Use Incidence check

Use the count of how many previous draws each number appeared in as inputs.
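A minimal Python sketch of such an incidence count, assuming each number is counted at most once per draw and that numbers never drawn get a count of zero. Whether Stonito Lotto scales these counts before feeding them to the network is not stated, so the sketch returns raw counts; the function name is hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Sequence


def incidence(previous_draws: Iterable[Sequence[int]], pool: int) -> Dict[int, int]:
    """Count how many previous draws contain each number from 1 to pool."""
    counts = Counter(n for draw in previous_draws for n in set(draw))
    # Numbers that were never drawn still get an entry, with count 0.
    return {n: counts.get(n, 0) for n in range(1, pool + 1)}


print(incidence([(1, 2, 3), (2, 3, 4)], pool=5))  # {1: 1, 2: 2, 3: 2, 4: 1, 5: 0}
```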

Use Cross Presence check

Use a table of the mutual presence of pairs of numbers, that is, how often each pair of numbers appeared together in all previous draws, as inputs.
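A cross presence table can be pictured as a symmetric grid with one row and one column per number. The Python sketch below is a hypothetical illustration of that idea, not the program's internal format: cell `[a-1][b-1]` counts the draws that contained both `a` and `b`.

```python
from itertools import combinations
from typing import Iterable, List, Sequence


def cross_presence(draws: Iterable[Sequence[int]], pool: int) -> List[List[int]]:
    """Symmetric pool x pool table of how many draws contained both numbers."""
    table = [[0] * pool for _ in range(pool)]
    for draw in draws:
        # Each unordered pair in the draw is counted once per draw.
        for a, b in combinations(sorted(set(draw)), 2):
            table[a - 1][b - 1] += 1
            table[b - 1][a - 1] += 1  # keep the table symmetric
    return table
```

For a pool of 39 there are 741 distinct pairs, so this option can add a large number of values to the network's input.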

Use Maximum Last Rounds check

History may be quite large, so including all draws in training would create a heavy processing burden. It makes sense to limit training to a number of the most recent drawings. It is up to the user to find the optimal number for a particular game.

Only Use Rounds After check

Similar to the previous option, but this one limits training to draws after a selected date. Apart from the date, it does not limit the number of drawings included in training.
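The two history limits above can be sketched as a filter over the list of past rounds. This Python example is a hypothetical illustration: it assumes "after" means strictly later than the selected date, and, in case both options are enabled, that the date filter is applied before the round count. The manual does not say how the two interact.

```python
from datetime import date
from typing import List, Optional, Sequence, Tuple

Round = Tuple[date, Tuple[int, ...]]  # hypothetical: (draw date, numbers drawn)


def select_rounds(history: Sequence[Round],
                  max_last_rounds: Optional[int] = None,
                  after: Optional[date] = None) -> List[Round]:
    """Apply the optional date limit, then keep only the most recent rounds."""
    rounds = sorted(history, key=lambda r: r[0])  # oldest first
    if after is not None:
        rounds = [r for r in rounds if r[0] > after]  # assumption: strictly after
    if max_last_rounds is not None:
        # Slice from the end; max(0, ...) makes a limit of 0 return no rounds.
        rounds = rounds[max(0, len(rounds) - max_last_rounds):]
    return rounds
```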

Update existing check

If checked, no new network is created; the existing network is updated instead. Otherwise, a new network is created and the current network is saved.

Name of the network

This text identifies the network in the list of trained networks for a particular system. It is saved only when the training process completes.

OK button

Starts training the network. This may take some time. During the process, the current epoch and error values are updated after each finished epoch.

You are advised to use multiple networks with various settings and keep track of how well they perform in future games.

You can adjust them any time you want.

To add a new network to a particular game, just uncheck the Update existing checkbox.

After training is completed, the newly created network is selected as active.

You can delete the selected network from the main menu. Deleting a network is necessary only if you want to reduce the number of networks. Otherwise, you can simply update the settings and name of the network and retrain it to replace the existing network.

The main menu also has a Train all networks option, which retrains all networks in succession. The last network to be retrained is the network for patterns.

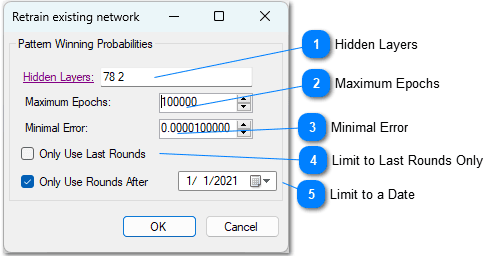

Neural Network for Pattern

Setting up and training this network differs very little from the main neural network described in the previous topic.

This network has fewer parameters and is much simpler to train and use.

With this trained network, you can check how good any given combination looks as a jackpot combination, based on previous draws.

Hidden Layers

The internal structure of the neural network, represented by the number of nodes in each layer between the input and output layers.

Maximum Epochs

The training will finish when this number of epochs is reached.

Minimal Error

The training will finish when the last error value is less than or equal to this value.

Limit to Last Rounds Only

If checked, the entered value limits the history draws used for training to that number of most recent rounds.

Limit to a Date

If checked, the history draws used for training are limited to draws that came after the selected date.

Network Performance

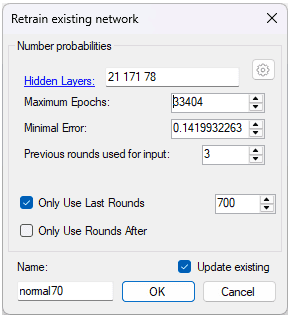

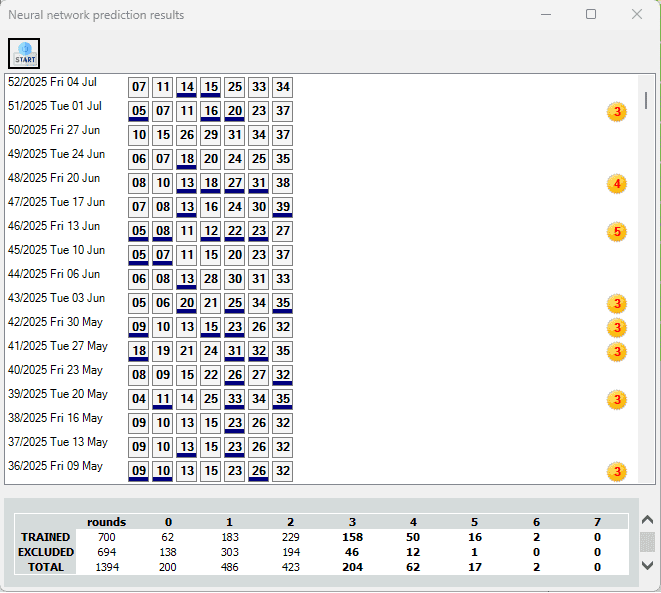

For the example system Lutrija Srbije Loto 7/39, I trained the network using this setup.

Training Settings

- Number Prediction: a network used for calculating the probability of each number appearing in the next round, using predefined input values.

- Winning Pattern: a network used to calculate the similarity of any combination to previous winning combinations. The input is a combination, and the output is a probability (0-1).

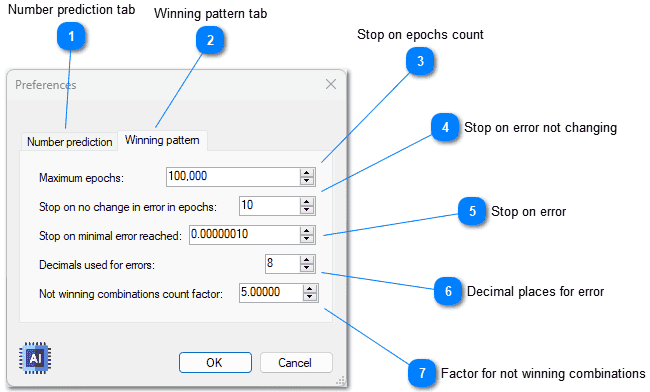

Number prediction tab

This tab page is for setting up the networks used for number prediction. The results of these networks are the probabilities (0-1) of each number appearing in the next draw.
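As an illustration of how these per-number probabilities might be used, the Python sketch below picks the numbers with the highest predicted probability. This is an assumption about one possible use of the output, not a description of how Stonito Lotto builds combinations; the function `top_numbers` is hypothetical, and ties are broken in favor of the lower number.

```python
from typing import Dict, List


def top_numbers(probabilities: Dict[int, float], count: int) -> List[int]:
    """Return the `count` numbers with the highest probability, in ascending order."""
    # Sort by descending probability; equal probabilities fall back to the lower number.
    ranked = sorted(probabilities, key=lambda n: (-probabilities[n], n))
    return sorted(ranked[:count])


print(top_numbers({1: 0.1, 2: 0.9, 3: 0.5, 4: 0.5, 5: 0.2}, 3))  # [2, 3, 4]
```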

Winning pattern tab

This tab page is for setting up Winning Pattern networks. They have the same settings as the Number Prediction networks, except for the last setting (at the bottom). Their result is the similarity (0-1) of any combination to the previous winning combinations.

Stop on epochs count

The training will stop, regardless of the minimal error, if this number of epochs is reached.

Stop on error not changing

The error changes on each epoch. The training will stop if the error, represented with the defined number of decimal places, doesn't change over this number of epochs.

Stop on error

The training will stop if the minimal error is reached, regardless of the epoch count.

Decimal places for error

Defines how many decimal places are used to represent the error computed in every epoch.
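The stop settings above can be combined into one check that runs after every epoch. In this minimal Python sketch, each rule is switched off by passing `None`, mirroring the checkboxes; the class name is hypothetical, and it assumes the minimal-error rule compares the unrounded error while the not-changing rule compares the error rounded to the chosen number of decimal places.

```python
from typing import Optional


class StopRules:
    """Hypothetical combination of the stop settings; None disables a rule."""

    def __init__(self, max_epochs: Optional[int], min_error: Optional[float],
                 unchanged_epochs: Optional[int], decimals: int):
        self.max_epochs = max_epochs
        self.min_error = min_error
        self.unchanged_epochs = unchanged_epochs
        self.decimals = decimals
        self._last_rounded: Optional[float] = None
        self._unchanged = 0  # consecutive epochs with the same rounded error

    def should_stop(self, epoch: int, error: float) -> bool:
        """Call after each finished epoch (numbered from 1) with its error."""
        rounded = round(error, self.decimals)
        if rounded == self._last_rounded:
            self._unchanged += 1
        else:
            self._unchanged = 0
            self._last_rounded = rounded
        hit_epochs = self.max_epochs is not None and epoch >= self.max_epochs
        hit_error = self.min_error is not None and error <= self.min_error
        stalled = (self.unchanged_epochs is not None
                   and self._unchanged >= self.unchanged_epochs)
        return hit_epochs or hit_error or stalled
```

With 2 decimal places, errors of 0.431, 0.434 and 0.429 all read as 0.43, so they count as "not changing" even though the raw values differ. Fewer decimal places therefore make this rule stop training sooner.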

Factor for not winning combinations

Used only for the winning pattern network. It defines how many non-winning combinations are included in the training set. For example, if you have 1000 combinations in the draw history, a factor of 2.0 means that the training set will include 2000 random non-winning combinations and 1000 winning ones. A factor of 2.5 would give 2500 non-winning combinations.
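A minimal Python sketch of how such a training set could be assembled, assuming winning combinations are labelled 1.0, random non-winning combinations are labelled 0.0, and non-winning combinations are drawn uniformly and kept unique. The function name and labels are hypothetical; the manual only specifies the count ratio.

```python
import random
from typing import List, Sequence, Set, Tuple

Combo = Tuple[int, ...]


def build_training_set(winning: Sequence[Combo], factor: float, pool: int,
                       picks: int, rng: random.Random) -> List[Tuple[Combo, float]]:
    """Winning combinations (label 1.0) plus factor x as many random losers (0.0)."""
    winning_sorted = [tuple(sorted(c)) for c in winning]
    winning_set: Set[Combo] = set(winning_sorted)
    target = round(factor * len(winning))  # e.g. 2.5 x 1000 -> 2500
    non_winning: Set[Combo] = set()
    # Assumes target is far below the number of possible combinations.
    while len(non_winning) < target:
        combo = tuple(sorted(rng.sample(range(1, pool + 1), picks)))
        if combo not in winning_set:  # skip any combination that actually won
            non_winning.add(combo)
    data = [(c, 1.0) for c in winning_sorted] + [(c, 0.0) for c in non_winning]
    rng.shuffle(data)  # mix winning and non-winning examples
    return data
```

Loto 7/39 has 15,380,937 possible combinations, so a random combination almost never matches a past winning one and the loop finishes quickly.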